Usage

rwf(y, h = 10, drift = FALSE, level = c(80, 95), fan = FALSE, lambda = NULL, biasadj = FALSE, bootstrap = FALSE, npaths = 5000, x = y)
naive(y, h = 10, level = c(80, 95), fan = FALSE, lambda = NULL, biasadj = FALSE, bootstrap = FALSE, npaths = 5000, x = y)
snaive(y, h = 2 * frequency(x), level = c(80, 95), fan = FALSE, lambda = NULL, biasadj = FALSE, bootstrap = FALSE, npaths = 5000, x = y)

Arguments

y: a numeric vector or time series of class ts
h: Number of periods for forecasting
drift: Logical flag. If TRUE, fits a random walk with drift model.
level: Confidence levels for prediction intervals.
fan: If TRUE, level is set to seq(51, 99, by = 3).


This is suitable for fan plots.
lambda: Box-Cox transformation parameter. If lambda = "auto", then a transformation is automatically selected using BoxCox.lambda. The transformation is ignored if NULL. Otherwise, the data are transformed before the model is estimated.
biasadj: Use adjusted back-transformed mean for Box-Cox transformations. If transformed data is used to produce forecasts and fitted values, a regular back transformation will result in median forecasts. If biasadj is TRUE, an adjustment will be made to produce mean forecasts and fitted values.
bootstrap: If TRUE, use a bootstrap method to compute prediction intervals. Otherwise, assume a normal distribution.
npaths: Number of bootstrapped sample paths to use if bootstrap = TRUE.
x: Deprecated. Included for backwards compatibility.

Details

The random walk with drift model is $Y_t = c + Y_{t-1} + Z_t$, where $Z_t$ is a normal iid error. Forecasts are given by $Y_{n+h} = ch + Y_n$. If there is no drift (as in naive), the drift parameter c = 0. Forecast standard errors allow for uncertainty in estimating the drift parameter (unlike the corresponding forecasts obtained by fitting an ARIMA model directly).

The seasonal naive model is $Y_t = Y_{t-m} + Z_t$, where $Z_t$ is a normal iid error.

Value

An object of class 'forecast'.

The function summary is used to obtain and print a summary of the results, while the function plot produces a plot of the forecasts and prediction intervals. The generic accessor functions fitted.values and residuals extract useful features of the value returned by naive or snaive.

An object of class 'forecast' is a list containing at least the following elements:

model: A list containing information about the fitted model
method: The name of the forecasting method as a character string
mean: Point forecasts as a time series
lower: Lower limits for prediction intervals
upper: Upper limits for prediction intervals
level: The confidence values associated with the prediction intervals
x: The original time series (either object itself or the time series used to create the model stored as object)
residuals: Residuals from the fitted model. That is x minus fitted values.
fitted: Fitted values (one-step forecasts)

Author(s)

Rob J Hyndman
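The point forecasts described in the Details section can be sketched in a few lines. The following is a minimal Python illustration, not the package's implementation: the function names and the sample series are hypothetical, the drift is estimated as the average first difference, and prediction intervals are left out entirely.

```python
def naive_forecast(y, h):
    """Random walk without drift: repeat the last observation h times."""
    return [y[-1]] * h


def drift_forecast(y, h):
    """Random walk with drift: Y_n + c*k for k = 1..h.

    The drift c is estimated as the mean of the first differences,
    which simplifies to (last - first) / (n - 1).
    """
    c = (y[-1] - y[0]) / (len(y) - 1)
    return [y[-1] + c * k for k in range(1, h + 1)]


def seasonal_naive_forecast(y, h, m):
    """Seasonal naive: repeat the last full season of length m."""
    last_season = y[-m:]
    return [last_season[(k - 1) % m] for k in range(1, h + 1)]


# Hypothetical series with a season length of 4
series = [10, 12, 14, 13, 15, 17, 16, 18]
print(naive_forecast(series, 3))              # [18, 18, 18]
print(drift_forecast(series, 3))
print(seasonal_naive_forecast(series, 5, 4))  # [15, 17, 16, 18, 15]
```

The drift forecast moves away from the last observation by the same amount c at every step, which is the "ch" term in the forecast equation.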

Associative and Time Series Forecasting involves using past data to generate a number, set of numbers, or scenario that corresponds to a future occurrence. It is absolutely essential to short-range and long-range planning.

Time Series and Associative models are both quantitative forecasting techniques, and they are more objective than qualitative techniques such as the Delphi Technique and market research.

Time Series Models

These are based on the assumption that history will repeat itself, so there will be identifiable patterns of behaviour that can be used to predict future behaviour. This model is useful when you have a short time requirement (eg days) to analyse products in their growth stages to predict short-term outcomes. To use this model you look at several historical periods and choose a method that minimises a chosen measure of error. Then you use that method to predict the future. To do this you use detailed data by SKU (Stock Keeping Unit), which is readily available.

In TSM there may be underlying behaviours to identify, as well as the causes of that behaviour. The data may show causal patterns that appear to repeat themselves; the trick is to determine which are true patterns that can be used for analysis and which are merely random variations. The patterns you look for include:

- Trends: long-term movements in either direction
- Cycles: wavelike variations lasting more than a year, usually tied to economic or political conditions (eg gas prices have a long-term impact on travel trends)
- Seasonality: short-term variations related to season, month, or particular day (eg Christmas sales, Monday trade etc)

In addition there are causes of behaviour that are not patterns, such as worker strikes, natural disasters and other random variations.

Simple uses of this model include “naive” forecasting and averaging, but both take little account of the variations and patterns.

- “Naive” forecast uses the actual demand for the past period as the forecasted demand for the next period, on the assumption that the past will repeat and any trends, seasonality, or cycles are either reflected in the previous period’s demand or do not exist.
- Simple average takes the average of some number of periods of past data by summing each period and dividing the result by the number of periods (great for short-term basic forecasting).
- Moving average takes a predetermined number of periods, sums their actual demand, and divides by the number of periods to reach a forecast. For each subsequent period, the oldest period of data drops off and the latest period is added.
- Weighted average applies a predetermined weight to each month of past data, sums the weighted data from each period, then divides by the total of the weights. If the forecaster adjusts the weights so that their sum is equal to 1, then the weights are simply multiplied by the actual demand of each applicable period and the results are summed to achieve a weighted forecast. Generally, the more recent the data is, the higher the weight.
- Weighted moving average is a combination of weighted and moving average, which assigns weights to a predetermined number of periods of actual data and computes the forecast the same way as moving average forecasts. As with all moving forecasts, as each new period is added, the data from the oldest period is discarded.
- Exponential smoothing is a more complex form of weighted moving average in which the weight falls off exponentially as the data ages. This averaging technique takes the previous period’s forecast and adjusts it by a predetermined smoothing constant multiplied by the difference between the demand that actually occurred during the previously forecasted period and the previous forecast (called the forecast error).

Holt’s Model

An extension of exponential smoothing used when time-series data exhibits a linear trend. This method is known by several other names: double smoothing; trend-adjusted exponential smoothing; forecast including trend.
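The averaging techniques described above (moving average, weighted moving average and simple exponential smoothing) can be sketched in Python as follows. This is only an illustration under stated assumptions: the demand figures, weights and smoothing constant are made up, and seeding the first forecast with the first observed demand is one common convention rather than the only one.

```python
def moving_average(demand, n):
    """Forecast the next period as the mean of the last n periods."""
    return sum(demand[-n:]) / n


def weighted_moving_average(demand, weights):
    """Weighted mean of the last len(weights) periods.

    weights[0] applies to the oldest of those periods, weights[-1] to the
    most recent one. Dividing by sum(weights) means the weights do not
    have to add up to 1.
    """
    recent = demand[-len(weights):]
    return sum(w * d for w, d in zip(weights, recent)) / sum(weights)


def exponential_smoothing(demand, alpha):
    """New forecast = previous forecast + alpha * (actual - previous forecast).

    Assumption: the first forecast is seeded with the first observation.
    """
    forecast = demand[0]
    for actual in demand:
        forecast = forecast + alpha * (actual - forecast)
    return forecast


# Hypothetical demand for four past periods, oldest first
demand = [100, 110, 120, 130]
print(moving_average(demand, 3))                      # 120.0
print(weighted_moving_average(demand, [1, 2, 3]))
print(exponential_smoothing(demand, 0.5))             # 121.25
```

A smoothing constant close to 1 makes the forecast follow the latest demand closely, while a value close to 0 makes it change slowly.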

A more complex form known as the Holt-Winters Model brings both trend and seasonality into the equation. This can be analysed using either the multiplicative or additive method. In the additive version, seasonality is expressed as a quantity to be added to or subtracted from the series average. In the multiplicative model, seasonality is expressed as a percentage (seasonal relatives or seasonal indexes) of the average (or trend). The values are then multiplied by these percentages in order to incorporate seasonality.

Associative Models

Also known as “causal” models, these involve the identification of variables that can be used to predict another variable of interest. They are based on the assumption that the historical relationship between “dependent” and “independent” variables will remain valid in future and that each independent variable is easy to predict. This form of analysis can take several months and is used for medium-term forecasts for products in their growth or maturity phase.

The procedure for this model is to collect several periods of history relating to the independent and dependent variables, then establish the relationship that minimizes the mean squared error of forecast vs actual, using linear or non-linear and simple or multiple regression analysis. So you first predict the independent variable, then use the established relationship between that independent variable and the dependent ones to predict what the dependent variables will be. You then develop an equation that summarizes the effects of the predictor variables.

To do this you will need aggregate data, which is not always readily available, and this model can become overly complex the more factors are included as variables. Examples of the relationship between independent and dependent variables include: interest rates will impact home loan applications, soil conditions will affect crop yields, location and size of land will affect sales levels.

Techniques

Linear regression: the objective is to develop an equation that summarizes the effects of the predictor (independent) variables upon the forecasted (dependent) variable. If the forecasted variable were plotted against the predictor variable, the object would be to obtain the equation of a straight line that minimizes the sum of the squared deviations from the line (with deviation being the vertical distance from each point to the line). Where there is more than one predictor variable, or if the relationship between predictor and forecast is not linear, simple linear regression won’t be adequate.
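As an illustration of simple linear regression, the sketch below fits a straight line by least squares using the standard closed-form solution. It is written in Python; the function name and the interest-rate and loan-application figures are hypothetical and only serve to show the mechanics.

```python
def fit_line(x, y):
    """Least-squares straight line y = intercept + slope * x.

    Minimizes the sum of squared vertical deviations from the line.
    """
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


# Hypothetical data: interest rate (%) and home loan applications
rates = [3.0, 3.5, 4.0, 4.5, 5.0]
applications = [520, 480, 455, 410, 390]

intercept, slope = fit_line(rates, applications)
# Forecast the dependent variable for a projected interest rate of 5.5%
print(intercept + slope * 5.5)
```

The forecast depends on a projected value of the predictor (here 5.5%), which is why associative models assume the independent variable is itself easy to predict.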

For multiple predictors, multiple regression should be used, while non-linear relationships need curvilinear regression.

Econometric forecasting: uses complex mathematical equations to show past relationships between demand and the variables that influence the demand. An equation is derived and then tested and fine-tuned to ensure that it is as reliable a representation of the past relationship as possible. Once this is done, projected values of the influencing variables (income, prices, etc.) are inserted into the equation to make a forecast. An example of this is the ARIMA model (autoregressive integrated moving-average).

NB Box and Jenkins proposed a three-stage methodology: model identification, estimation and validation. Identification involves determining whether the series is stationary and whether seasonality is present, by examining plots of the series and its autocorrelation and partial autocorrelation functions. Then models are estimated using non-linear time series or maximum likelihood estimation procedures. Finally, validation is carried out with diagnostic checking, such as plotting the residuals to detect outliers and evidence of model fit.

Evaluating Forecasts

Forecasts are evaluated by computing the bias, mean absolute deviation (MAD), mean square error (MSE), or mean absolute percent error (MAPE) of the forecast, for example using different values for the smoothing constant alpha. Bias is the sum of the forecast errors, so over-forecasts and under-forecasts cancel each other out in it. MAD, MSE and MAPE measure the size of the errors regardless of their direction, and so give a fuller picture of accuracy than bias alone.

Choosing a method for different organisations/purposes

No single technique works in every situation, but the two most important factors are cost and accuracy. Other factors to consider are the availability of historical data, the time and resources needed to gather and analyse the data, and the timeline of the forecast, that is, how far into the future you are trying to look.

Often an organisation can use several methods for different purposes. For example, a charitable organisation might lack funds and technology but usually keeps excellent records of its history, and there is a multitude of readily accessible socio-economic data that can be applied to identify patterns and behaviours. Such organisations are also usually looking at predicting the situation for the next year or three years, depending on their funding cycles, and do not have months to spare while they determine variables. In this discussion of a state energy board’s forecasting options (see link to pdf) they discuss the use of several methods depending on what they are trying to achieve.
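The evaluation measures named above (bias, MAD, MSE and MAPE) can be computed as in the following Python sketch. The actual and forecast figures are made up, the error is taken as actual minus forecast, and MSE is divided by n here (some textbooks divide by n - 1 instead).

```python
def forecast_errors(actual, forecast):
    """Error per period, defined here as actual minus forecast."""
    return [a - f for a, f in zip(actual, forecast)]


def bias(actual, forecast):
    """Sum of the errors; positive and negative errors cancel out."""
    return sum(forecast_errors(actual, forecast))


def mad(actual, forecast):
    """Mean absolute deviation."""
    errors = forecast_errors(actual, forecast)
    return sum(abs(e) for e in errors) / len(errors)


def mse(actual, forecast):
    """Mean squared error (divided by n in this sketch)."""
    errors = forecast_errors(actual, forecast)
    return sum(e * e for e in errors) / len(errors)


def mape(actual, forecast):
    """Mean absolute percent error; assumes no actual value is zero."""
    errors = forecast_errors(actual, forecast)
    return 100 * sum(abs(e) / a for e, a in zip(errors, actual)) / len(errors)


# Hypothetical actual demand and forecasts for four periods
actual = [100, 110, 120, 130]
forecast = [90, 115, 120, 140]
print(bias(actual, forecast))  # -5
print(mad(actual, forecast))   # 6.25
print(mse(actual, forecast))   # 56.25
print(mape(actual, forecast))
```

In this example the bias is small because an under-forecast of 10 and over-forecasts of 5 and 10 largely cancel, while MAD and MSE still show that the individual errors are sizeable.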

Introduction ‘Time’ is the most important factor which ensures success in a business. It’s difficult to keep up with the pace of time. But, technology has developed some powerful methods using which we can ‘see things’ ahead of time. Don’t worry, I am not talking about Time Machine. Let’s be realistic here! I’m talking about the methods of prediction & forecasting. One such method, which deals with time based data is Time Series Modeling.

As the name suggests, it involves working on time (years, days, hours, minutes) based data to derive hidden insights for informed decision making. Time series models are very useful when you have serially correlated data. Most business houses work on time series data to analyze sales numbers for the next year, website traffic, competitive position and much more.

However, it is also one of the areas which many analysts do not understand. So, if you aren’t sure about the complete process of time series modeling, this guide will introduce you to various levels of time series modeling and its related techniques. The following topics are covered in this tutorial:

Table of Contents

1. Basics – Time Series Modeling
2. Exploration of Time Series Data in R
3. Introduction to ARMA Time Series Modeling
4. Framework and Application of ARIMA Time Series Modeling

Time to get started!

Basics – Time Series Modeling

Let’s begin from basics. This includes stationary series, random walks, the Rho coefficient and the Dickey Fuller test of stationarity.

If these terms are already scaring you, don’t worry: they will become clear in a bit and I bet you will start enjoying the subject as I explain it.

Stationary Series

There are three basic criteria for a series to be classified as a stationary series:

1. The mean of the series should not be a function of time; rather, it should be a constant. The image below has the left hand graph satisfying the condition whereas the graph in red has a time dependent mean.

2. The variance of the series should not be a function of time. This property is known as homoscedasticity. The following graph depicts what is and what is not a stationary series. (Notice the varying spread of distribution in the right hand graph.)

3. The covariance of the i th term and the (i + m) th term should not be a function of time. In the following graph, you will notice the spread becomes closer as the time increases. Hence, the covariance is not constant with time for the ‘red series’.

Why do I care about ‘stationarity’ of a time series?

The reason I took up this section first is that unless your time series is stationary, you cannot build a time series model. In cases where the stationarity criteria are violated, the first requisite is to stationarize the time series and then try stochastic models to predict this time series.

There are multiple ways of bringing about this stationarity. Some of them are detrending, differencing etc.

Random Walk

This is the most basic concept of the time series. You might know the concept well. But I have found many people in the industry who interpret a random walk as a stationary process. In this section, with the help of some mathematics, I will make this concept crystal clear for ever. Let’s take an example.

Example: Imagine a girl moving randomly on a giant chess board. In this case, the next position of the girl depends only on her last position.

Now imagine you are sitting in another room and are not able to see the girl. You want to predict the position of the girl with time. How accurate will you be? Of course you will become more and more inaccurate as the position of the girl changes. At t=0 you know exactly where the girl is. Next time, she can only move to 8 squares and hence your probability dips to 1/8 instead of 1, and it keeps on going down.

Now let’s try to formulate this series:

$X(t) = X(t-1) + Er(t)$

where Er(t) is the error at time point t. This is the randomness the girl brings at every point in time.

Now, if we recursively substitute all the Xs, we finally end up with the following equation:

$X(t) = X(0) + \sum_{i=1}^{t} Er(i)$

Now, let’s try validating our assumptions of a stationary series on this random walk formulation:

1. Is the mean constant?

$E[X(t)] = E[X(0)] + \sum_{i=1}^{t} E[Er(i)]$

We know that the expectation of any error will be zero as it is random. Hence we get $E[X(t)] = E[X(0)]$ = constant.

2. Is the variance constant?

$\mathrm{Var}[X(t)] = \mathrm{Var}[X(0)] + \sum_{i=1}^{t} \mathrm{Var}[Er(i)]$

The errors are independent, so their variances add up. Taking the starting point X(0) as known, $\mathrm{Var}[X(t)] = t \cdot \mathrm{Var}(\text{Error})$, which is time dependent.

Hence, we infer that the random walk is not a stationary process as it has a time variant variance. Also, if we check the covariance, we see that it too is dependent on time.
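The two checks above can also be seen in a small simulation. The Python sketch below generates many independent random walks with standard normal errors (an assumption made for illustration, as are the number of paths and the seed) and looks at the mean and variance across paths at a few time points.

```python
import random
from statistics import mean, pvariance


def random_walk(steps, rng):
    """X(t) = X(t-1) + Er(t), starting from X(0) = 0, with N(0, 1) errors."""
    x = 0.0
    path = []
    for _ in range(steps):
        x += rng.gauss(0.0, 1.0)
        path.append(x)
    return path


def values_at(paths, t):
    """Value of every simulated path at time point t (1-based)."""
    return [p[t - 1] for p in paths]


rng = random.Random(42)  # fixed seed so the run is repeatable
paths = [random_walk(100, rng) for _ in range(2000)]

for t in (10, 50, 100):
    vals = values_at(paths, t)
    # The mean stays near 0, the variance grows roughly like t
    print(t, round(mean(vals), 2), round(pvariance(vals), 1))
```

The mean across paths stays close to zero at every time point, while the variance across paths is close to t, in line with Var[X(t)] = t · Var(Error).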

Let’s spice things up a bit. We already know that a random walk is a non-stationary process. Let us introduce a new coefficient in the equation to see if we can make the formulation stationary.

Introduced coefficient: Rho

$X(t) = \text{Rho} \cdot X(t-1) + Er(t)$

Now, we will vary the value of Rho to see if we can make the series stationary. Here we will interpret the scatter visually and not do any test to check stationarity.

Let’s start with a perfectly stationary series with Rho = 0. Here is the plot for the time series:

Increasing the value of Rho to 0.5 gives us the following graph:

You might notice that our cycles have become broader, but essentially there does not seem to be a serious violation of the stationarity assumptions. Let’s now take a more extreme case of Rho = 0.9.

We still see that X returns from extreme values to zero after some intervals. This series also does not violate stationarity significantly. Now, let’s take a look at the random walk with Rho = 1.

This obviously is a violation of the stationarity conditions. What makes Rho = 1 a special case which comes out badly in a stationarity test?
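The four cases can be reproduced with a short simulation. The Python sketch below is an illustration only: it assumes standard normal errors, a start at zero and an arbitrary seed, and compares the variance of the first and second half of each simulated series as a rough visual-free check.

```python
import random
from statistics import pvariance


def simulate_ar1(rho, steps, rng):
    """X(t) = rho * X(t-1) + Er(t), starting from 0, with N(0, 1) errors."""
    x = 0.0
    path = []
    for _ in range(steps):
        x = rho * x + rng.gauss(0.0, 1.0)
        path.append(x)
    return path


rng = random.Random(0)  # fixed seed so the run is repeatable
for rho in (0.0, 0.5, 0.9, 1.0):
    path = simulate_ar1(rho, 5000, rng)
    first_half, second_half = path[:2500], path[2500:]
    # For rho < 1 both halves show a similar spread; for rho = 1 they need not
    print(rho, round(pvariance(first_half), 1), round(pvariance(second_half), 1))
```

For Rho below 1 the spread settles around 1 / (1 - Rho²) times the error variance (about 1, 1.33 and 5.26 for the three values used here), whereas for Rho = 1 there is no such limit and the two halves can look very different.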

We will find the mathematical reason for this. Let’s take the expectation on each side of the equation $X(t) = \text{Rho} \cdot X(t-1) + Er(t)$:

$E[X(t)] = \text{Rho} \cdot E[X(t-1)]$

This equation is very insightful. The next X (at time point t) is being pulled down to Rho times the last value of X. For instance, if X(t-1) = 1, then E[X(t)] = 0.5 (for Rho = 0.5). So, if X moves in any direction away from zero, it is pulled back towards zero in the next step. The only component which can drive it even further is the error term, and the error term is equally likely to go in either direction. What happens when Rho becomes 1? No force can pull X down in the next step.

Dickey Fuller Test of Stationarity

What you just learnt in the last section is formally known as the Dickey Fuller test. Here is a small tweak which is made to our equation to convert it to a Dickey Fuller test:

$X(t) = \text{Rho} \cdot X(t-1) + Er(t)$

$X(t) - X(t-1) = (\text{Rho} - 1) \cdot X(t-1) + Er(t)$

We have to test whether Rho - 1 is significantly different from zero or not. The null hypothesis is that Rho - 1 = 0, that is, the series is a random walk. If the null hypothesis gets rejected, we have a stationary time series.

Stationarity testing and converting a series into a stationary series are the most critical processes in time series modelling. You need to memorize each and every detail of this concept to move on to the next step of time series modelling.

Let’s now consider an example to show you what a time series looks like.

Exploration of Time Series Data in R

Here we’ll learn to handle time series data in R. Our scope will be restricted to exploring a time series data set; we will not go as far as building time series models.

I have used an inbuilt data set of R called AirPassengers. The dataset consists of monthly totals of international airline passengers, 1949 to 1960.

Loading the Data Set

Following is the code which will help you load the data set and print a few top-level metrics.

cycle(AirPassengers) # This will print the cycle across years.
plot(aggregate(AirPassengers, FUN = mean)) # This will aggregate the cycles and display a year on year trend
boxplot(AirPassengers ~ cycle(AirPassengers)) # Box plot across months will give us a sense of the seasonal effect

Important Inferences

1. The year on year trend clearly shows that the number of passengers has been increasing without fail.
2. The variance and the mean value in July and August are much higher than in the rest of the months.
3. Even though the mean value of each month is quite different, their variance is small. Hence, we have a strong seasonal effect with a cycle of 12 months or less.

Exploring data becomes most important in a time series model – without this exploration, you will not know whether a series is stationary or not. As in this case we already know many details about the kind of model we are looking out for. Let’s now take up a few time series models and their characteristics. We will also take this problem forward and make a few predictions. Introduction to ARMA Time Series Modeling ARMA models are commonly used in time series modeling. In ARMA model, AR stands for auto-regression and MA stands for moving average.

If these words sound intimidating to you, worry not – I’ll simplify these concepts in next few minutes for you! We will now develop a knack for these terms and understand the characteristics associated with these models. But before we start, you should remember, AR or MA are not applicable on non-stationary series.

In case you get a non-stationary series, you first need to stationarize the series (by taking a difference or transformation) and then choose from the available time series models. First, I’ll explain each of these two models (AR and MA) individually. Next, we will look at the characteristics of these models.

Auto-Regressive Time Series Model

Let’s understand AR models using the case below: the current GDP of a country, say x(t), is dependent on the last year’s GDP, i.e. x(t – 1). The hypothesis is that the total cost of production of products and services in a country in a fiscal year (known as GDP) is dependent on the set up of manufacturing plants / services in the previous year and the newly set up industries / plants / services in the current year. But the primary component of the GDP is the former one. Hence, we can formally write the equation of GDP as:

$x(t) = \text{alpha} \cdot x(t-1) + \text{error}(t)$

This equation is known as the AR(1) formulation. The numeral one (1) denotes that the next instance is solely dependent on the previous instance.

The alpha is a coefficient which we seek so as to minimize the error function. Notice that x(t- 1) is indeed linked to x(t-2) in the same fashion. Hence, any shock to x(t) will gradually fade off in future. For instance, let’s say x(t) is the number of juice bottles sold in a city on a particular day. During winters, very few vendors purchased juice bottles. Suddenly, on a particular day, the temperature rose and the demand of juice bottles soared to 1000.

However, after a few days, the climate became cold again. But, knowing that the people got used to drinking juice during the hot days, there were 50% of the people still drinking juice during the cold days. In following days, the proportion went down to 25% (50% of 50%) and then gradually to a small number after significant number of days. The following graph explains the inertia property of AR series: Moving Average Time Series Model Let’s take another case to understand Moving average time series model. A manufacturer produces a certain type of bag, which was readily available in the market. Being a competitive market, the sale of the bag stood at zero for many days. So, one day he did some experiment with the design and produced a different type of bag.

This type of bag was not available anywhere in the market. Thus, he was able to sell the entire stock of 1000 bags (let’s call this x(t)). The demand got so high that the bag ran out of stock. As a result, some 100 odd customers couldn’t purchase this bag. Let’s call this gap the error at that time point. With time, the bag lost its woo factor. But there were still a few customers left who had gone home empty handed the previous day.

Following is a simple formulation to depict the scenario:

$x(t) = \text{beta} \cdot \text{error}(t-1) + \text{error}(t)$

If we try plotting this graph, it will look something like this:

Did you notice the difference between the MA and AR models? In the MA model, the noise / shock quickly vanishes with time. In the AR model, the shock has a much longer lasting effect.

Difference between AR and MA models

The primary difference between an AR and an MA model is based on the correlation between time series objects at different time points. For an MA model, the correlation between x(t) and x(t-n) is always zero once n is larger than the order of the MA. This follows directly from the fact that the covariance between x(t) and x(t-n) is zero for MA models at those lags (something which we can see from the example in the previous section: a shock only affects the next period). However, in the AR model the correlation of x(t) and x(t-n) declines gradually as n becomes larger.
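This contrast can be checked numerically. The Python sketch below simulates an AR(1) and an MA(1) series and computes their sample autocorrelations; the coefficient 0.7, the series length, the seed and the helper names are assumptions chosen only for illustration.

```python
import random


def sample_acf(series, max_lag):
    """Sample autocorrelation for lags 1..max_lag."""
    n = len(series)
    avg = sum(series) / n
    centered = [v - avg for v in series]
    denom = sum(c * c for c in centered)
    return [
        sum(centered[t] * centered[t - lag] for t in range(lag, n)) / denom
        for lag in range(1, max_lag + 1)
    ]


def simulate_ar1(alpha, n, rng):
    """x(t) = alpha * x(t-1) + error(t), with N(0, 1) errors."""
    x = 0.0
    out = []
    for _ in range(n):
        x = alpha * x + rng.gauss(0.0, 1.0)
        out.append(x)
    return out


def simulate_ma1(beta, n, rng):
    """x(t) = beta * error(t-1) + error(t), with N(0, 1) errors."""
    prev_error = rng.gauss(0.0, 1.0)
    out = []
    for _ in range(n):
        error = rng.gauss(0.0, 1.0)
        out.append(beta * prev_error + error)
        prev_error = error
    return out


rng = random.Random(1)  # fixed seed so the run is repeatable
ar_acf = sample_acf(simulate_ar1(0.7, 20000, rng), 4)
ma_acf = sample_acf(simulate_ma1(0.7, 20000, rng), 4)
print("AR(1):", [round(r, 2) for r in ar_acf])  # decays gradually
print("MA(1):", [round(r, 2) for r in ma_acf])  # near zero after lag 1
```

For the AR(1) series the autocorrelation at lag n is close to 0.7 to the power n, so it fades slowly. For the MA(1) series only lag 1 is clearly non-zero (close to 0.7 / (1 + 0.7²), about 0.47) and the later lags are near zero.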

This difference gets exploited irrespective of having the AR model or MA model. The correlation plot can give us the order of the MA model.

Exploiting ACF and PACF plots

Once we have got the stationary time series, we must answer two primary questions:

Q1. Is it an AR or MA process?
Q2. What order of AR or MA process do we need to use?

The trick to solve these questions is available in the previous section. Didn’t you notice?

The first question can be answered using the Total Correlation Chart (also known as the Auto-correlation Function / ACF). The ACF is a plot of the total correlation between different lag functions. For instance, in the GDP problem, the GDP at time point t is x(t). We are interested in the correlation of x(t) with x(t-1), x(t-2) and so on. Now let’s reflect on what we have learnt above.

In a moving average series of lag n, we will not get any correlation between x(t) and x(t – n – 1). Hence, the total correlation chart cuts off at the nth lag. So it becomes simple to find the lag for an MA series. For an AR series this correlation will gradually go down without any cut off value. So what do we do if it is an AR series?

Here is the second trick. If we find out the partial correlation of each lag, it will cut off after the degree of the AR series. For instance, if we have an AR(1) series and we exclude the effect of the 1st lag (x(t-1)), our 2nd lag (x(t-2)) is independent of x(t). Hence, the partial correlation function (PACF) will drop sharply after the 1st lag. Following are the examples which will clarify any doubts you have on this concept:

[Figure: ACF and PACF plots]

The blue line above marks values that are significantly different from zero. Clearly, the graph above has a cut off on the PACF curve after the 2nd lag, which means this is mostly an AR(2) process.

[Figure: ACF and PACF plots]

Clearly, the graph above has a cut off on the ACF curve after the 2nd lag, which means this is mostly an MA(2) process.

Till now, we have covered how to identify the type of stationary series using ACF and PACF plots. Now, I’ll introduce you to a comprehensive framework to build a time series model. In addition, we’ll also discuss the practical applications of time series modelling.

Framework and Application of ARIMA Time Series Modeling

A quick revision: till here we’ve learnt the basics of time series modeling, time series in R and ARMA modeling.

Now is the time to join these pieces and make an interesting story.

Overview of the Framework

This framework (shown below) specifies the step by step approach on ‘How to do a Time Series Analysis’:

As you would be aware, the first three steps have already been discussed above. Nevertheless, they are delineated briefly below.

Step 1: Visualize the Time Series

It is essential to analyze the trends prior to building any kind of time series model. The details we are interested in pertain to any kind of trend, seasonality or random behaviour in the series. We have covered this part in the second part of this series.

Step 2: Stationarize the Series

Once we know the patterns, trends, cycles and seasonality, we can check if the series is stationary or not. Dickey-Fuller is one of the popular tests to check this. We have covered this test in the first part of this article series. It doesn’t end here! What if the series is found to be non-stationary?

There are three commonly used techniques to make a time series stationary:

1. Detrending: Here, we simply remove the trend component from the time series. For instance, say the equation of my time series is:

$x(t) = (\text{mean} + \text{trend} \cdot t) + \text{error}$

We’ll simply remove the part in the parentheses and build a model for the rest.

2. Differencing: This is the commonly used technique to remove non-stationarity. Here we try to model the differences of the terms and not the actual terms. For instance,

$x(t) - x(t-1) = \text{ARMA}(p, q)$

This differencing is called the Integration part in AR(I)MA. Now, we have three parameters:

p: AR
d: I
q: MA

3. Seasonality: Seasonality can easily be incorporated in the ARIMA model directly. More on this has been discussed in the applications part below.

Step 3: Find Optimal Parameters

The parameters p, d, q can be found using ACF and PACF plots. In addition to this approach, if both ACF and PACF decrease gradually, it indicates that we need to make the time series stationary and introduce a value for “d”.

Step 4: Build ARIMA Model

With the parameters in hand, we can now try to build the ARIMA model. The values found in the previous step might be approximate estimates, and we need to explore more (p,d,q) combinations. The one with the lowest BIC and AIC should be our choice. We can also try some models with a seasonal component, in case we notice any seasonality in the ACF/PACF plots.

Step 5: Make Predictions

Once we have the final ARIMA model, we are ready to make predictions for future time points.

We can also visualize the trends to cross validate if the model works fine. Applications of Time Series Model Now, we’ll use the same example that we have used above. Then, using time series, we’ll make future predictions. We recommend you to check out the example before proceeding further. Where did we start?

Following is the plot of the number of passengers with years. Try and make observations on this plot before moving further in the article.

Here are my observations:

1. There is a trend component: the number of passengers grows year by year.
2. There looks to be a seasonal component which has a cycle of 12 months or less.
3. The variance in the data keeps on increasing with time.

We know that we need to address two issues before we test for stationarity. One, we need to remove unequal variances. We do this by taking the log of the series. Two, we need to address the trend component. We do this by taking the difference of the series. Now, let’s test the resultant series.

library(tseries) # provides adf.test
adf.test(diff(log(AirPassengers)), alternative = "stationary", k = 0)

Augmented Dickey-Fuller Test
data: diff(log(AirPassengers))
Dickey-Fuller = -9.6003, Lag order = 0, p-value = 0.01
alternative hypothesis: stationary

We see that the series is stationary enough to do any kind of time series modelling.

The next step is to find the right parameters to be used in the ARIMA model. We already know that the ‘d’ component is 1, as we need 1 difference to make the series stationary. We find the other parameters using the correlation plots. Following are the ACF and PACF plots for the series.

acf(diff(log(AirPassengers)))
pacf(diff(log(AirPassengers)))

Clearly, the ACF plot cuts off after the first lag. Since the ACF is the curve that cuts off, the value of p should be 0, while the value of q should be 1 or 2. After a few iterations, we found that (0,1,1) as (p,d,q) comes out to be the combination with the least AIC and BIC.

Let’s fit an ARIMA model and predict the future 10 years. Also, we will try fitting a seasonal component in the ARIMA formulation. Then, we will visualize the prediction along with the training data. You can use the following code to do the same.

Tavish is an IIT post graduate, a results-driven analytics professional and a motivated leader with 7+ years of experience in the data science industry. He has led various high performing data science teams in the financial domain. His work ranges from creating high level business strategy for customer engagement and acquisition to developing Next-Gen cognitive Deep/Machine Learning capabilities aligned to these high level strategies for multiple domains including Retail Banking, Credit Cards and Insurance. Tavish is fascinated by the idea of artificial intelligence inspired by human intelligence and enjoys every discussion, theory or even movie related to this idea.

Hi I am a medical specialist (MD Pediatrics) with further training in research and statistics (Panjab University, Chandigarh). In our medical settings, time series data are often seen in ICU and anesthesia related research where patients are continuously monitored for days or even weeks generating such data.

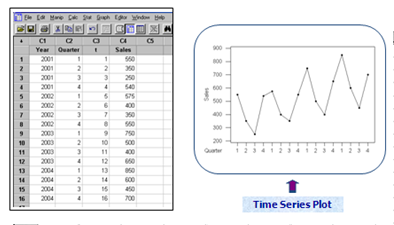


Frankly speaking, your article has clearly decoded this arcane process of time series analysis with quite wonderful insight into its practical relevance. Fabulous article Mr Tavish, kindly write more about ARIMA modelling. Thanks a lot Dr Sahul Bharti.

First of all, congratulations on your work around here. It’s been very useful. Thank you. I have a doubt, and I hope that you can help me. I performed a Dickey-Fuller test on both series, AirPassengers and diff(log(AirPassengers)). Here are the results:

Augmented Dickey-Fuller Test
data: diff(log(AirPassengers))
Dickey-Fuller = -9.6003, Lag order = 0, p-value = 0.01
alternative hypothesis: stationary

and

Augmented Dickey-Fuller Test
data: diff(log(AirPassengers))
Dickey-Fuller = -9.6003, Lag order = 0, p-value = 0.01
alternative hypothesis: stationary

In both tests I got a small p-value that allows me to reject the non-stationarity hypothesis. If so, is the first series already stationary? This means that if I had performed a stationarity test on the original series, I would have moved on to the next step. Thank you in advance.

@Hugo, Yes, adf.test(AirPassengers) indicates that the series is stationary.

This is a bit misleading. The reason is that this test first de-trends the series (i.e., removes the trend component) and then checks for stationarity. Hence it flags the series as stationary. There is another test in the package fUnitRoots. Please try this code:

## Start
install.packages("fUnitRoots") # If you have already installed this package, you can omit this line
library(fUnitRoots)
adfTest(AirPassengers)
adfTest(log(AirPassengers))
adfTest(diff(AirPassengers))
## End

Hope this helps.

Hi, After you run this pred.

Hey Amy, ts.plot will plot several time series on the same plot.

The first two entries are the two time series he’s plotting. The last two entries are visual parameters (we’ll come back to those). The first entry plots the AirPassengers time series as a dark, continuous line. The second entry is also a time series, but it is a little more confusing: “2.718^pred$pred”. First, you have to know what pred$pred is. The function predict here is a generic function that works differently for different classes plugged into it (it says so if you type ?predict). The class we’re working with is an Arima class. If you type ?predict.Arima you will find a good description of what the function is all about. predict.Arima returns something with a “pred” part (for the prediction) and a “se” part (for the standard error). We want the “pred” part, hence pred$pred. So, pred$pred is a time series. Now, 2.718^pred$pred is one too. You have to remember that 2.718 is approximately the constant e, and then this makes sense. He’s just undoing the log that he applied to the data when he created “fit”.
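To make the back-transformation concrete, here is a small Python sketch (the thread itself uses R). The log-scale forecast values are made up for illustration; the point is only that raising 2.718 to a power is a close approximation of the exact exponential, which undoes a natural log.

```python
import math

# Hypothetical forecasts on the log scale (stand-ins for pred$pred).
log_forecast = [6.0, 6.1, 6.2]

# What the plotting call does: raise the rounded constant 2.718 to each value.
approx = [2.718 ** v for v in log_forecast]

# The exact inverse of the natural log.
exact = [math.exp(v) for v in log_forecast]

# Relative error introduced by rounding e to 2.718.
rel_err = [abs(a - e) / e for a, e in zip(approx, exact)]
print(rel_err)

# Round trip: exp undoes log on an arbitrary positive value.
round_trip = math.exp(math.log(432.0))
```

The rounding error is small at these magnitudes, so the plot is not visibly affected, but using the exact exponential (`exp(pred$pred)` in R) avoids the approximation altogether.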

As for the last two parameters, log = "y" sets the y-axis to a log scale. And finally, lty = c(1,3) sets the line type to 1 (solid) for the original time series and 3 (dotted) for the predicted time series.

Hey Tavish, really enjoyed the content. Just a small doubt: can you please elaborate on covariance in stationarity terms?

I understand the covariance term, but here in time series, it is not coming to my mind. Can you please help me understand the third condition of a stationary series, i.e. “The covariance of the i th term and the (i + m) th term should not be a function of time.” Please help me understand it from a data perspective, e.g. if I have sales data for each date. How can you explain covariance in a real-life example with daily sales data?

Hello, the data you used in your tutorial, AirPassengers, is already a time series object. My question is, how can I make/prepare my own time series object? I currently have a historical currency exchange data set, with the first column being the date and the other 20 columns titled by country, with the exchange rates as their values. After I convert my date column into a date object and use the same commands as in your tutorial, the results are funny. For example, start(data$Date) gives me a result of: 1 1 1 and frequency(data$Date) returns: 1 1. Can you please explain how to prepare our data so we can use the functions?

Hi Kevin, an ACF plot is a bar chart of the coefficients of correlation between a time series and lags of itself.

A PACF plot is a plot of the partial correlation coefficients between the series and lags of itself. To find p and q you need to look at the ACF and PACF plots. They are interpreted as follows:

AR(p) model: the ACF plot tails off, but the PACF plot cuts off after p lags.
MA(q) model: the PACF plot tails off, but the ACF plot cuts off after q lags.
ARMA(p,q) model: both the ACF and PACF plots tail off; you can choose different combinations of p and q, trying smaller values of p and q first.
ARIMA(p,d,q) model: an ARMA model with d rounds of differencing to make the time series stationary.

Use AIC and BIC to find the most appropriate model. Lower values of AIC and BIC are desirable. “Tails off” means slow decay of the plot, i.e. the plot has significant spikes at higher lags too. “Cuts off” means the bar is significant at lag p and not significant at any higher-order lags. Here is a link that might help you understand the concept further. Hope this helps.
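The idea of a bar being “significant” can be sketched numerically. The Python example below is an illustration under stated assumptions, not part of the original answer: `sample_acf` is a hypothetical helper, the MA(1) series is simulated with an arbitrary coefficient of 0.8, and the bound of 1.96/sqrt(n) is the usual approximate 95% band drawn on ACF plots.

```python
import math
import random


def sample_acf(x, max_lag):
    """Sample autocorrelation of x for lags 0..max_lag."""
    n = len(x)
    mean = sum(x) / n
    c0 = sum((v - mean) ** 2 for v in x)
    return [
        sum((x[t] - mean) * (x[t + k] - mean) for t in range(n - k)) / c0
        for k in range(max_lag + 1)
    ]


# Simulate an MA(1) process: y_t = e_t + 0.8 * e_(t-1) (coefficient is an assumption).
random.seed(0)
noise = [random.gauss(0, 1) for _ in range(501)]
ma1 = [noise[t] + 0.8 * noise[t - 1] for t in range(1, 501)]

acf = sample_acf(ma1, 5)

# Approximate 95% significance band used on ACF plots.
bound = 1.96 / math.sqrt(len(ma1))

# Lags whose bars fall outside the band.
significant = [k for k in range(1, 6) if abs(acf[k]) > bound]
print(acf, bound, significant)
```

For an MA(1) process the lag-1 bar should stand well outside the band while higher lags mostly stay inside it, which is the “cut off after q lags” pattern described above; an occasional higher lag can still cross the band by chance.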

Introduction

Most of us would have heard about the new buzz in the market, i.e. cryptocurrency. Many of us would have invested in their coins too.

But is investing money in such a volatile currency safe? How can we make sure that investing in these coins now would generate a healthy profit in the future? We can’t be sure, but we can generate an approximate value based on previous prices. Time series models are one way to predict them.

Besides cryptocurrencies, there are multiple important areas where time series forecasting is used, for example: forecasting sales, call volume in a call center, solar activity, ocean tides, stock market behaviour, and many others.

Assume the manager of a hotel wants to predict how many visitors to expect next year, in order to adjust the hotel’s inventories accordingly and make a reasonable guess at the hotel’s revenue. Based on the data of the previous years/months/days, (s)he can use time series forecasting to get an approximate number of visitors. The forecasted number of visitors will help the hotel manage its resources and plan accordingly.

In this article, we will learn about multiple forecasting techniques and compare them by implementing them on a dataset. We will go through the different techniques and see how to use these methods to improve the score. Let’s get started!

Table of Contents

1. Understanding the Problem Statement and Dataset
2. Installing library (statsmodels)
3. Method 1 – Start with a Naive Approach
4. Method 2 – Simple average
5. Method 3 – Moving average
6. Method 4 – Single Exponential smoothing
7. Method 5 – Holt’s linear trend method
8. Method 6 – Holt’s Winter seasonal method
9. Method 7 – ARIMA

Understanding the Problem Statement and Dataset

We are provided with a time series problem involving prediction of the number of commuters of JetRail, a new high-speed rail service by Unicorn Investors. We are provided with 2 years of data (Aug 2012 – Sept 2014), and using this data we have to forecast the number of commuters for the next 7 months. Let’s start working on the dataset downloaded from the above link. In this article, I’m working with the train dataset only.

    import pandas as pd
    import numpy as np
    import matplotlib.pyplot as plt

    # Importing data
    df = pd.read_csv('train.csv')

    # Printing head
    df.head()

    # Printing tail
    df.tail()

As seen from the print statements above, we are given 2 years of data (2012-2014) at an hourly level with the number of commuters travelling, and we need to estimate the number of commuters in the future.

In this article, I’m subsetting the dataset and aggregating it on a daily basis to explain the different methods. The subset covers August 2012 – Dec 2013.
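As a rough illustration of the daily aggregation and subsetting step, here is a minimal pandas sketch. It does not read the real train.csv: the frame below is a hypothetical stand-in with three days of hourly rows, and the column name `Count` is an assumption. Both the daily mean and the daily sum are computed so the two aggregations can be compared.

```python
from datetime import datetime, timedelta

import pandas as pd

# Hypothetical stand-in for train.csv: 72 hourly rows with a Datetime string column.
start = datetime(2013, 12, 30)
stamps = [(start + timedelta(hours=i)).strftime("%d-%m-%Y %H:%M") for i in range(72)]
df = pd.DataFrame({"Datetime": stamps, "Count": range(72)})  # "Count" is an assumed name

# Parse the strings and use the timestamps as the index so resample() can work.
df["Timestamp"] = pd.to_datetime(df["Datetime"], format="%d-%m-%Y %H:%M")
df = df.set_index("Timestamp")

# Aggregate hourly rows to one row per day.
daily_mean = df["Count"].resample("D").mean()  # average commuters per hour, by day
daily_sum = df["Count"].resample("D").sum()    # total commuters, by day

# Subset a date range with label-based slicing (both end dates are included).
subset = daily_sum.loc["2013-12-30":"2013-12-31"]
print(daily_mean, daily_sum, subset, sep="\n")
```

With complete days of 24 hourly rows, the daily sum is simply 24 times the daily mean, so the choice changes the scale of the series rather than its shape; it matters more when some hours are missing.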


Creating train and test files for modeling: the first 14 months (August 2012 – October 2013) are used as training data and the next 2 months (Nov 2013 – Dec 2013) as testing data.

Hello Gurchetan, thanks for your interesting article. Even though I use R, I think the question is interesting for any user of time series, regardless of the tool used.

I implemented a time series forecast for a client using Holt-Winters. Everything was fine, but because my client is not an IT- or stats-proficient guy, I needed to provide, along with the implementation, some kind of algorithm that could calculate for him the 3 coefficients used in the Holt-Winters method. My first solution was very simple: just generate a random set of numbers, and the one that has the least SSE is the one the system would use to forecast the time series. At the moment it is working fine, but I would like to optimize it using, for example, Nelder-Mead. Because the environment is not pure R, not all the libraries are recognized, meaning that I would have to code the whole Nelder-Mead function. My question is: in your experience, is it worth the effort? Would my algorithm gain performance and/or accuracy?
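The random-search idea described here can be sketched in a few lines of Python. This is a simplified illustration, not the commenter's implementation: it tunes a single smoothing parameter for simple exponential smoothing rather than the three Holt-Winters coefficients, and `ses_sse`, `random_search` and the demand series are hypothetical.

```python
import random


def ses_sse(series, alpha):
    """Sum of squared one-step-ahead errors for simple exponential smoothing."""
    level = series[0]
    sse = 0.0
    for y in series[1:]:
        sse += (y - level) ** 2                 # error of forecasting y with the current level
        level = alpha * y + (1 - alpha) * level  # update the smoothed level
    return sse


def random_search(series, n_trials=200, seed=0):
    """Try random alphas in [0, 1) and keep the one with the lowest SSE."""
    rng = random.Random(seed)
    best_alpha, best_sse = None, float("inf")
    for _ in range(n_trials):
        alpha = rng.random()
        sse = ses_sse(series, alpha)
        if sse < best_sse:
            best_alpha, best_sse = alpha, sse
    return best_alpha, best_sse


# Hypothetical series with an upward trend.
demand = [12, 15, 14, 18, 21, 20, 24, 27, 26, 30]
alpha, sse = random_search(demand)
print(alpha, sse)
```

Random search only needs the SSE function, so it is easy to port to a restricted environment; a simplex method such as Nelder-Mead would typically reach a comparable optimum with fewer SSE evaluations, which matters more as the number of coefficients grows.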

Thanks a lot for your time.

Hello Gurchetan. First of all, my apologies. I never received your email reply, so I left it unanswered. I discovered today, a few months later, that you had actually answered me. And you were right! I found a Nelder-Mead function and put it to the test. Later I compared it with the optimization built into the Holt-Winters function, and the results were the same, with the difference that the optimization embedded in the function was a lot faster.

The only thing that made me feel uncomfortable was the use of SSE as the sole accuracy measure. So I had to write a MAPE function myself in order to feel more comfortable with the results I was achieving.
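For reference, a MAPE function of the kind mentioned here is short to write. The Python sketch below is an assumption about what such a function might look like, not the commenter's code; the `mape` name and the sample values are illustrative.

```python
def mape(actual, forecast):
    """Mean absolute percentage error, in percent.

    Undefined when any actual value is zero, so that case raises an error.
    """
    if len(actual) != len(forecast):
        raise ValueError("actual and forecast must have the same length")
    if any(a == 0 for a in actual):
        raise ValueError("MAPE is undefined when an actual value is zero")
    total = sum(abs((a - f) / a) for a, f in zip(actual, forecast))
    return 100.0 * total / len(actual)


# Hypothetical values: errors of 10%, 10% and 0%.
actual = [100, 200, 400]
forecast = [110, 180, 400]
score = mape(actual, forecast)
print(score)
```

Unlike SSE, MAPE is scale-free, so it can be compared across series with different magnitudes, at the cost of being unusable when actual values are zero or close to it.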

Thanks for your response, and my apologies for my delayed follow-up.

Hi Team, in the aggregation section you have the following:

#Aggregating the dataset at daily level
df.Timestamp = pd.to_datetime(df.Datetime, format='%d-%m-%Y %H:%M')
df.index = df.Timestamp
df = df.resample('D').mean()

If you are aggregating the data from hours to days, why do you take the mean of the resample?

I think it should be a sum over the resample, i.e. df = df.resample('D').sum(). Hourly commuters are added up for a day, so for each day you will have the cumulative number of commuters. Please correct me if I am wrong.

Hi, I got a warning about creating a new column in the dataframe by using the dot operator. This is the line of code: df.Timestamp = pd.to_datetime(df.Datetime, format='%d-%m-%Y %H:%M') But this created the column. When I replaced the dot operator with square brackets, there was no warning.

Here is my code: df["Timestamp"] = pd.to_datetime(df.Datetime, format='%d-%m-%Y %H:%M') I am using Windows 7, running a notebook in PyCharm with Python 3.6. Can you please let me know why the system is throwing the warning? Is it because I used a different Python version, or is it a recent change to pandas where they don’t allow creating a new column through the dot operator?
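The difference between the two assignments can be checked directly. The sketch below uses a tiny made-up frame and reflects my understanding of recent pandas versions, which may differ from the version used in the article: assigning a list-like to a new attribute name emits a UserWarning and stores the value as a plain Python attribute, while bracket assignment creates a real column.

```python
import warnings

import pandas as pd

# Hypothetical two-row frame in the same date format as the article's data.
df = pd.DataFrame({"Datetime": ["25-08-2012 00:00", "25-08-2012 01:00"]})
parsed = pd.to_datetime(df["Datetime"], format="%d-%m-%Y %H:%M")

# Dot (attribute) assignment to a name that is not yet a column.
with warnings.catch_warnings(record=True) as caught:
    warnings.simplefilter("always")
    df.Timestamp = parsed
attr_made_column = "Timestamp" in df.columns

# Bracket assignment to the same name.
df["Timestamp"] = parsed
bracket_made_column = "Timestamp" in df.columns

print(attr_made_column, bracket_made_column)
print([str(w.message) for w in caught])
```

If this matches your pandas version, `df.Timestamp` after the dot assignment is readable as an attribute, which is why a following line such as `df.index = df.Timestamp` still works, but the column list is unchanged. The warning comes from pandas rather than from the Python version, and bracket assignment avoids it.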